|

5/7/2023 Flatmap scala

    scala> :t f1
    Int => List[Boolean]
    scala> List(2,3) flatMap f1
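As a minimal sketch of what that session is doing, assume `f1` has type `Int => List[Boolean]`. Its body is not shown in the source, so the definition below (and the object name `FlatMapTypes`) is hypothetical and only there to make the types concrete. The point is the difference in result type between `map` and `flatMap`.

```scala
object FlatMapTypes {
  // Hypothetical body: the source only gives the type Int => List[Boolean].
  val f1: Int => List[Boolean] = n => List(n % 2 == 0, n > 2)

  // map keeps one inner list per input element: List[List[Boolean]].
  val mapped: List[List[Boolean]] = List(2, 3).map(f1)

  // flatMap concatenates the inner lists: List[Boolean].
  val flattened: List[Boolean] = List(2, 3).flatMap(f1)

  def main(args: Array[String]): Unit = {
    println(mapped)
    println(flattened)
    // flatMap gives the same result as map followed by flatten.
    println(flattened == mapped.flatten)
  }
}
```

With this assumed `f1`, `flatMap` returns a single `List[Boolean]`, where `map` would return a list of lists.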

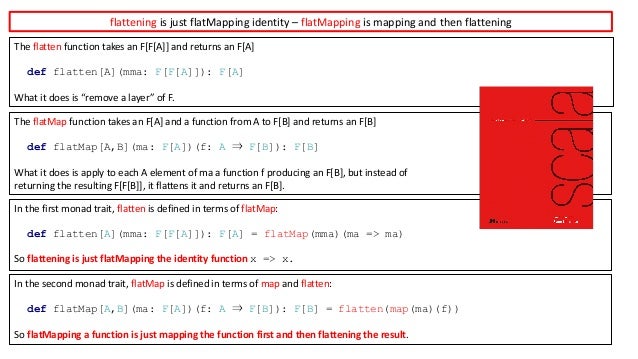

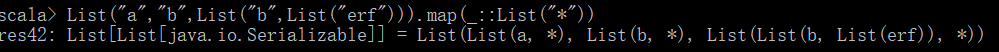

Both map and flatMap are transformation functions. Functions such as map(), mapPartitions(), flatMap(), filter(), and union() are all transformations. When applied to an RDD, map and flatMap each transform every element inside the RDD into something else.

Consider this simple RDD.

    scala> val rdd = sc.parallelize(Seq("Hadoop In Real World", "Big Data"))

The above RDD has 2 elements of type String.

map() transforms an RDD with N elements into an RDD with N elements. The important thing to note is that each element is transformed into exactly one other element, so the resulting RDD has the same number of elements as before. Applying split() to this RDD breaks each line into words wherever it sees a space between them. Collecting the result gives:

    res0: Array[Array[String]] = Array(Array(Hadoop, In, Real, World), Array(Big, Data))

The result RDD also has 2 elements, but each element is now an Array[String] and not a String as in the initial RDD. Each Array holds the list of words from the corresponding initial String.

    scala> rdd.map(_.split(" ")).count

The count is 2, which is the same as the count of the initial RDD before the split() transformation.

flatMap() transforms an RDD with N elements into an RDD with potentially more than N elements. In Scala, the flatMap() method works like the map() method, with the one difference that in flatMap the inner grouping of each item is removed and a single sequence is generated. It can be defined as a blend of the map method and the flatten method: it takes a function as a parameter, applies it to each element, and flattens the results.

JavaScript arrays offer the same operation. There, the flatMap() method returns a new array formed by applying a given callback function to each element of the array and then flattening the result by one level. It is identical to a map() followed by a flat() of depth 1 (arr.map(...args).flat()), but slightly more efficient than calling those two methods separately.

Let's try the same split() operation with flatMap().

    scala> rdd.flatMap(_.split(" ")).collect
    res1: Array[String] = Array(Hadoop, In, Real, World, Big, Data)

With flatMap(), the collected result is not Array[Array[String]] as it was with map(); it is Array[String]. flatMap() took care of "flattening" the output from Arrays to plain Strings. When you do a count() on the result RDD, you get 6, which is more than the number of elements in the initial RDD.

flatMap() on a Spark DataFrame operates similarly to the RDD version. It executes the specified function on every element of the DataFrame, splitting or merging the elements, so the result count of flatMap() can differ from the input count. The example here uses a SparkSession created through SparkSession.builder(), input rows held in arrayStructureData, and a schema arrayStructureSchema, a StructType that includes a languagesAtSchool column of type ArrayType(StringType).

    val df2 = df.flatMap(f => f.getSeq[String](1).map((f.getString(0), _, f.getString(2))))

Note that getSeq needs its element type spelled out, and that flatMap on a DataFrame needs an implicit encoder in scope, typically brought in with import spark.implicits._. The input DataFrame has 3 records, but after exploding the "language" column with flatMap() the result has 6 elements.

flatMap is also a member of the Traversable trait, which is implemented by Scala's collection classes. As the examples above show, flatMap lets us transform the underlying sequence by applying a function that goes from one element to many elements.
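The one-to-many behavior described here does not depend on Spark, so it can be sketched on plain Scala collections. The following is an illustration under stated assumptions, not the article's own code: the two input strings come from the RDD example, while the object name `FlatMapSketch` and the three rows (names, languages, states) are invented placeholders chosen so that 3 records expand to 6.

```scala
object FlatMapSketch {
  // The two lines from the RDD example, held in a local Seq instead of an RDD.
  val lines: Seq[String] = Seq("Hadoop In Real World", "Big Data")

  // map: one output element per input element, each an Array of words.
  val mapped: Seq[Array[String]] = lines.map(_.split(" "))

  // flatMap: the per-line arrays are concatenated into one flat sequence.
  val words: Seq[String] = lines.flatMap(_.split(" "))

  // Hypothetical rows of (name, languages, state); values are placeholders.
  val rows: Seq[(String, Seq[String], String)] = Seq(
    ("A", Seq("Java", "Scala"), "X"),
    ("B", Seq("Python"), "Y"),
    ("C", Seq("Go", "Rust", "C"), "Z")
  )

  // One output tuple per (row, language) pair, the same shape as the
  // df.flatMap call: the array column is "exploded" into separate records.
  val exploded: Seq[(String, String, String)] =
    rows.flatMap { case (name, langs, state) =>
      langs.map(lang => (name, lang, state))
    }

  def main(args: Array[String]): Unit = {
    println(s"map count: ${mapped.length}")
    println(s"flatMap count: ${words.length}")
    println(words.mkString(", "))
    exploded.foreach(println)
  }
}
```

On an actual RDD or DataFrame the calls look the same, but the work is distributed and nothing runs until an action such as collect or count is invoked.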

|
